How Can CTOs Implement AI Agents (Strategic Roadmap)

Before you start building an AI Agent, make sure your purpose and requirements are clear. Because, as a CTO, your responsibility is not just to “use AI,” but to architect scalable, secure, production-ready intelligent systems that deliver measurable business outcomes. In short, considering principles of building AI agents is essential.

Here's the checklist you can follow for building AI Agents:

- Define a clear business objective

- Choose the right architecture

- Implement RAG if required

- Add memory & tool orchestration

- Establish governance

- Design evaluation metrics

- Plan the scalability

CTOs searching “ How to build an AI agent?” typically want the following aspects:

1. A structured, production-ready roadmap

2. Architectural clarity

3. Technology stack decisions

4. Governance & security considerations

5. Scalability guidance

6. Cost vs ROI clarity

So, here's the step-by-step structure to build an AI Agent for CTOs

Step 1: Define the Business Objective (Not the Model)

Before selecting a large language model (LLM) or AI framework, clarify:

- What problem does the AI agent solve?

- Is it automating tasks, augmenting humans, or replacing manual workflows?

- What KPIs define success? (Accuracy, response time, cost reduction, revenue impact)

Example Enterprise Use Cases of Agentic AI

- AI customer support agent

- Internal knowledge assistant

- AI sales copilot

- Healthcare triage agent

- Financial risk monitoring agent

- DevOps automation agent

Your AI architecture should be problem-first, not model-first.

Step 2: Choose the Right AI Agent Architecture

AI agents typically follow one of these architectures:

1. Reactive Agents

2. Memory-Enabled Agents

3. Tool-Using Agents

4. Multi-Agent Systems

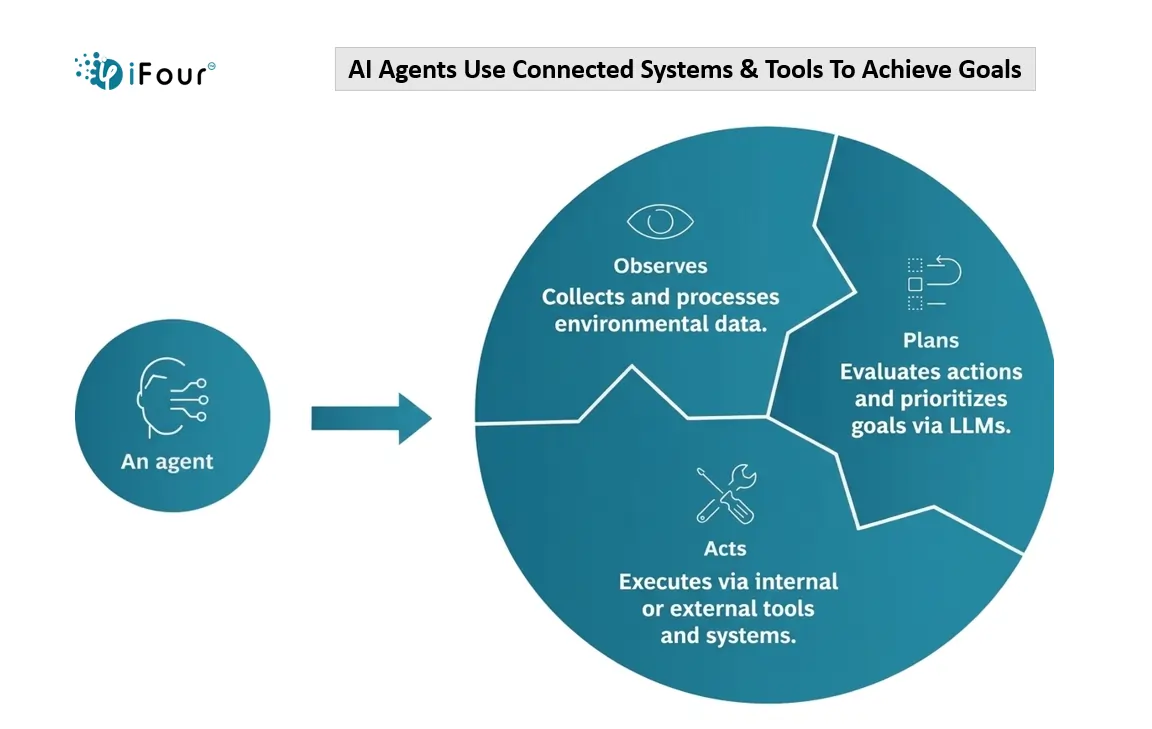

Step 3: Design the Core AI Agent Components

A production-ready AI agent includes the following layers:

1. Input Layer

-

User interface (chat, API, dashboard)

-

Event streams

-

Structured/unstructured data

2. Intelligence Layer

3. Memory Layer

-

Vector database

-

Session memory

-

Long-term knowledge base

4. Tool & Action Layer

-

REST APIs

-

Databases

-

CRM / ERP integrations

-

Workflow engines

5. Governance & Monitoring Layer

Enterprise AI agents fail when governance is ignored.

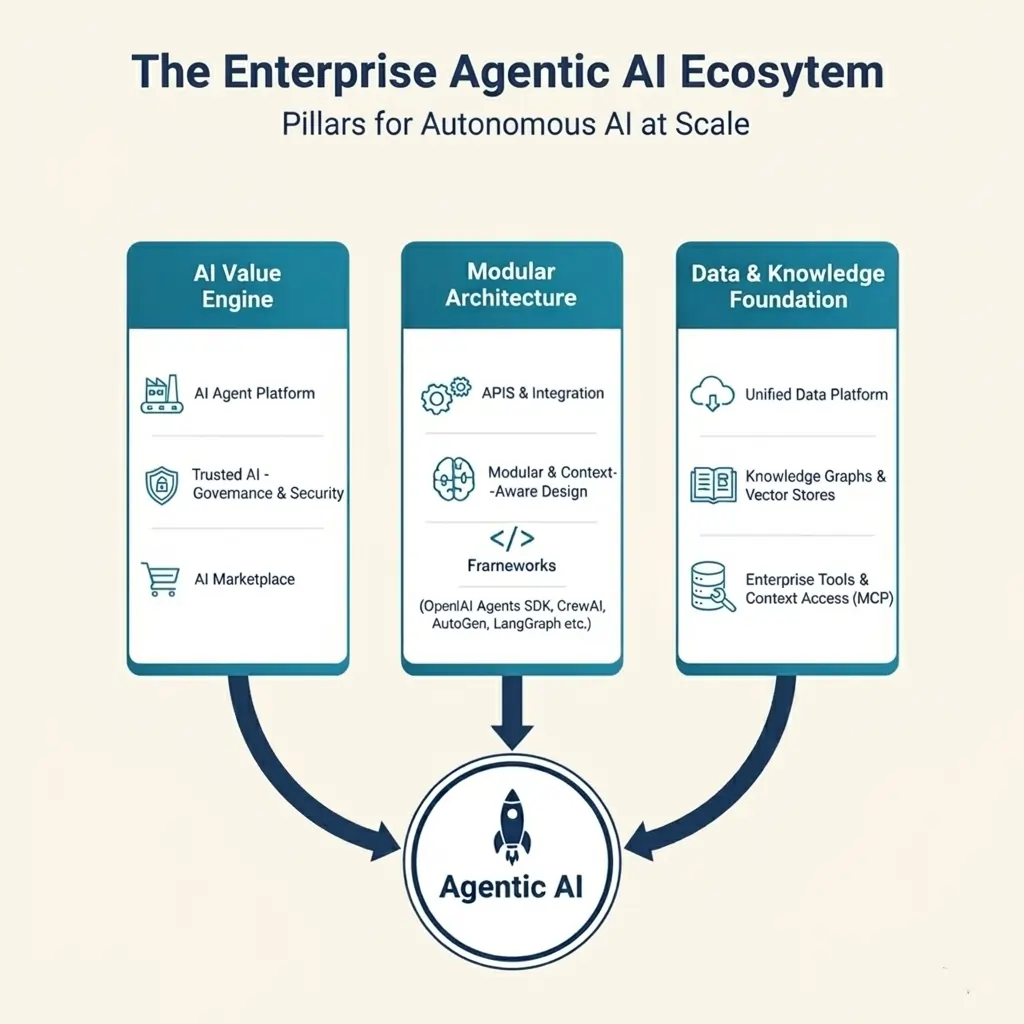

Step 4: Select the Technology Stack

I have published blog on PlusRadiology Site

AI Models

- Open-source LLMs

- Commercial LLM APIs

- Fine-tuned models

Frameworks for AI Agents

- LangChain

- Semantic Kernel

- AutoGen

- CrewAI

Infrastructure

- Cloud-native architecture

- Kubernetes orchestration

- Serverless compute

- GPU-based inference endpoints

Data Layer

- Vector databases

- Data lakes

- Knowledge graphs

For CTOs, stack selection must balance:

- Performance

- Cost

- Vendor lock-in risk

- Security

- Compliance

Step 5: Implement Retrieval-Augmented Generation (RAG)

If your AI agent needs domain-specific knowledge, RAG is essential.

RAG architecture includes:

1. Document ingestion pipeline

2. Embedding generation

3. Vector search

4. Context injection into LLM prompt

RAG improves:

- Factual accuracy

- Domain alignment

- Reduced hallucinations

- Data security

Without RAG, enterprise AI agents often provide generic answers.

Step 6: Establish Guardrails & AI Governance

AI governance is not optional. As a CTO, you must implement:

- Role-based access control

- Data encryption

- Prompt injection protection

- Toxicity filters

- Bias monitoring

- Audit trails

Consider regulatory compliance, such as:

Trust and explainability drive adoption.

Step 7: Test, Evaluate, and Optimize

AI agents require structured evaluation.

Testing Framework

- Unit testing for tool execution

- LLM response benchmarking

- Latency monitoring

- Hallucination rate analysis

- User feedback loop

Evaluation Metrics

- Task completion rate

- Accuracy score

- User satisfaction

- Cost per request

- Response time

Continuous optimization is mandatory.

Step 8: Deployment & Scaling Strategy

AI agent deployment should follow DevOps best practices:

- CI/CD pipelines

- Canary releases

- API rate limiting

- Horizontal scaling

- Observability dashboards

Plan for:

- Traffic spikes

- Model updates

- Versioning

- Failover mechanisms

Production AI systems must be resilient.

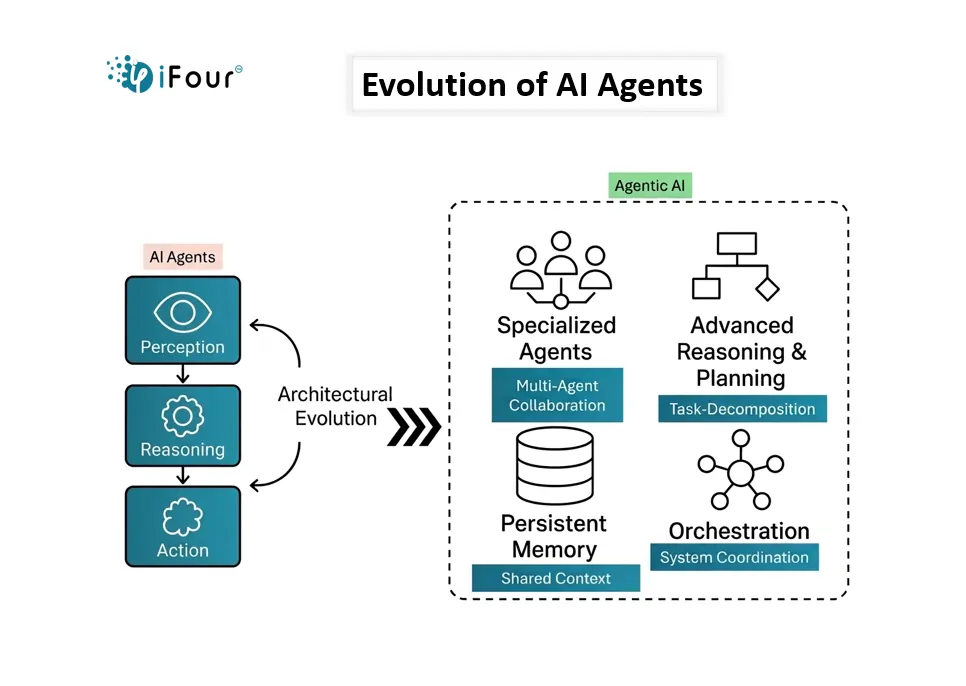

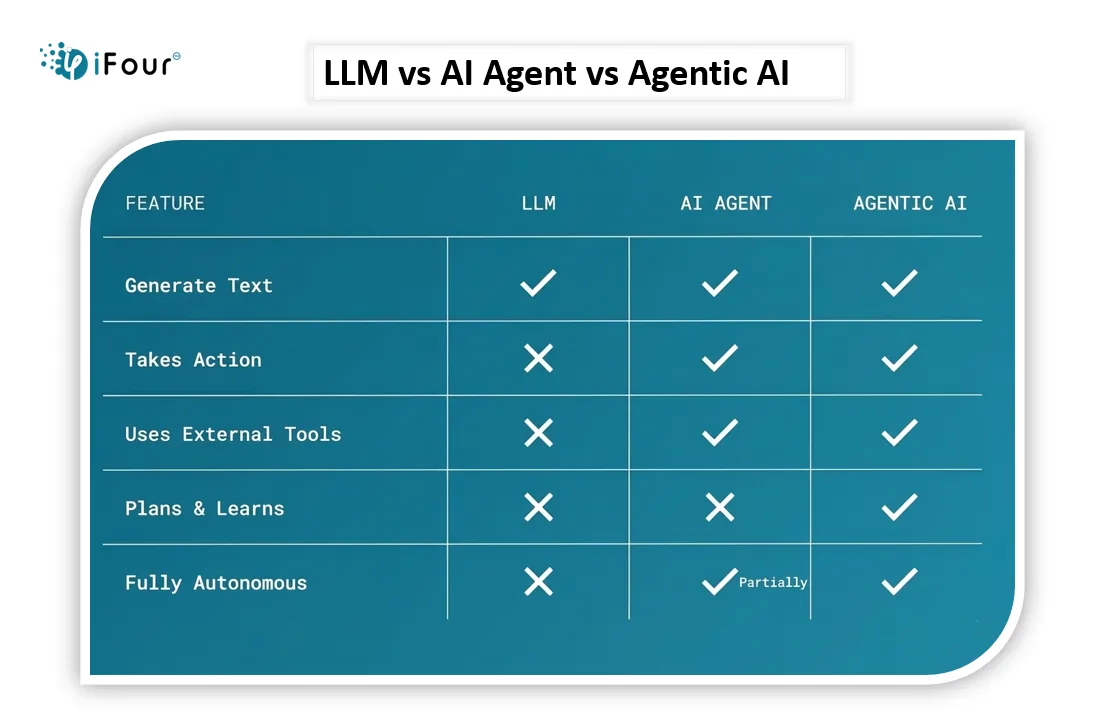

Meanwhile, check out the key differences between LLM, AI Agent, and Agentic AI in the following image.